This tutorial is for users running macOS.

ParseHub is a great tool for downloading text and URLs from a website. ParseHub also allows you to download actual files, such as PDFs or images, using our Dropbox integration. This tutorial will show you how to use ParseHub and wget together to download files after your run has completed.

1. Make sure you have wget installed. If you don't, you can install it with Homebrew by typing

brew install wget

into the Terminal. Homebrew will then download and install wget for you.

If you do not know how to install Homebrew, you can refer to this article.
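If you are not sure whether wget is already on your Mac, a small shell function can check before installing anything. This is only a sketch: the function name ensure_wget is our own invention for illustration, and it assumes that Homebrew's brew command is on your PATH whenever wget is missing.

```shell
# ensure_wget: hypothetical helper, not part of ParseHub or Homebrew.
# Reports where wget lives if it is installed; otherwise tries Homebrew.
ensure_wget() {
  if command -v wget >/dev/null 2>&1; then
    echo "wget is already installed at $(command -v wget)"
  elif command -v brew >/dev/null 2>&1; then
    brew install wget
  else
    echo "Homebrew was not found; install Homebrew first" >&2
    return 1
  fi
}
```

After pasting the function into the Terminal, run ensure_wget to perform the check.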

2. Once wget is installed, run your ParseHub project. Make sure the project includes an Extract command that scrapes all of the image URLs using the src attribute option.

Once your project has completed, save the results in CSV format as "urls.csv".

3. Open urls.csv and delete every column except for a single list of URLs and re-save the file as urls.csv. You should be left with a file that looks like this:

4. Open a Terminal and type:

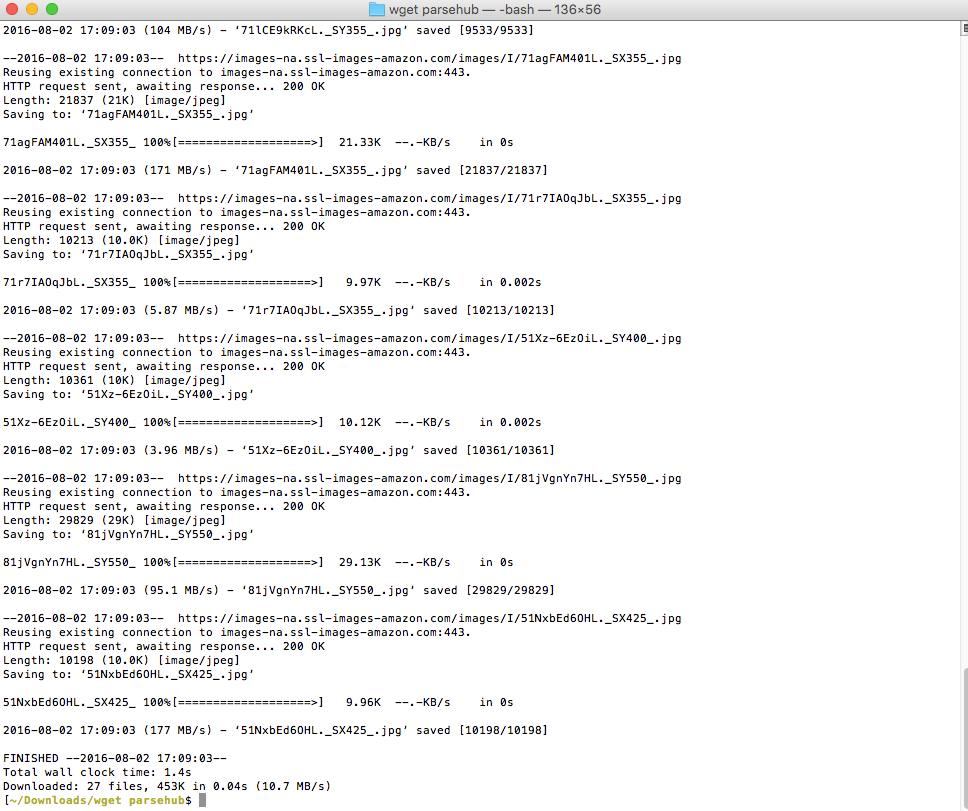

wget -i urls.csv

This will download every image in urls.csv to the current directory.
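If you would rather not edit the CSV by hand, the URL list can also be pulled out from the Terminal. The sketch below rests on a few assumptions: the only URLs in your export are the image URLs you want, no URL contains a comma or a double quote, and the names extract_urls, results.csv, urls.txt, and images are placeholders we made up for illustration.

```shell
# extract_urls FILE: print every http(s) URL found in FILE, one per line.
# Assumption: a URL ends at the first comma, double quote, or whitespace,
# so header rows and non-URL columns are skipped automatically.
extract_urls() {
  grep -Eo 'https?://[^",[:space:]]+' "$1"
}

# Hypothetical usage (file and folder names are placeholders):
#   extract_urls results.csv > urls.txt
#   wget -i urls.txt -P images
```

Here wget's -P option saves the downloads into the images folder instead of the current directory, which keeps them separate from the CSV.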

5. Enjoy!

Please reach out to our support team if you need more help with this.